How Notion evaluates AI at scale across 70 engineers

With Sarah Sachs, AI Modeling Lead

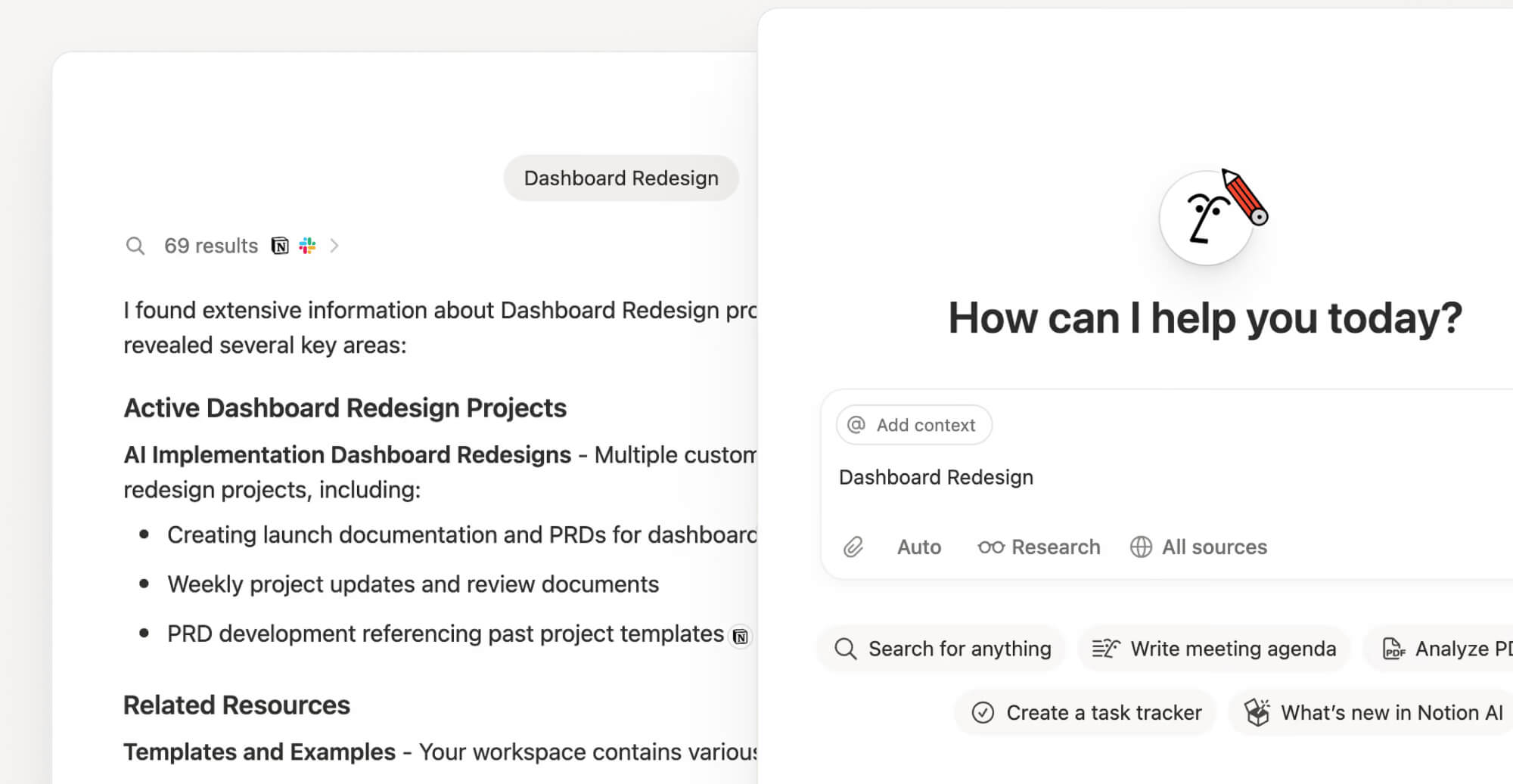

Notion is the connected workspace where teams bring knowledge and work together—so they can write, plan, organize, and find answers with AI.

Co-founders Ivan Zhao and Simon Last were early in recognizing the potential of generative AI and started experimenting with large language models (LLMs) soon after GPT-2 launched in 2019. Since then, they've built Notion AI around a few core capabilities: finding answers across company knowledge, drafting and refining content, summarizing work into clear next steps, and automating repetitive workflows.

Today, Notion is moving beyond assisting to actively doing valuable work on behalf of its customers, and Braintrust is a core part of its AI stack. Sarah Sachs, who leads Notion's AI modeling teams, has evolved Notion's evaluation practices from simple prompt-and-judge setups to a comprehensive framework that keeps 70 engineers aligned while deploying frontier models within hours of release.

Sarah's first task when joining Notion two years ago was to sit down in Braintrust and look at the worst customer experiences. Understanding where people were not happy, and how the team iterated on quality, set the foundation for everything that followed.

I first started working with Braintrust on my first day at Notion. I sat down in Braintrust and looked at some of the worst experiences our customers had and tried to understand how we can be better.

The evolution from simple evals to agentic evaluation

In 2023, Notion's AI evaluations were straightforward: one prompt, maybe two chained together, with a simple LLM judge and expected output. Today, agents are doing path-finding, adjusting based on their own results, and navigating a huge number of possible evaluation paths.

Today, our agents are doing a lot more path finding. They're finding different feedback from their own results and adjusting what they do, and that's kind of a combinatorial explosion in evaluation.

Notion has created evaluations to measure these new agents. With 70 engineers working at scale, vibe checks no longer work. Now, 80% of what the AI team does is based on evaluating feedback and traces in Braintrust: tinkering, measuring, and checking whether they are moving in the right direction.

Deploying frontier models in hours, not weeks

One of Notion's AI commitments is giving customers access to the latest models as quickly as possible, ideally within hours of release. This requires both rigorous evals and a team that can move rapidly from evaluation to production.

We've gotten a really good muscle, and I think everybody loves when a new model comes out because it's just a rush. And we have a muscle for doing it and we know what it's like and we just love experimenting and seeing what's new.

Notion runs evals that catch regressions, and evals that measure frontier performance. When a new frontier model comes out, it might pass a regression eval at 100%, similar to the last model. This gives a good sense of broad performance, but may not be fine-grained enough for implementation in Notion's product. To understand how a new model functions differently than prior models, Notion runs a frontier eval that can immediately identify what it does differently, so they can start deploying it for those specific use cases.
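The split between the two kinds of eval can be illustrated with a minimal sketch. It is not Notion's or Braintrust's implementation: the `CaseResult` type, the suite labels, the case names, and the pass/fail values are all hypothetical, chosen only to show how a candidate model can match the baseline on a regression suite while a frontier suite shows what it does differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseResult:
    case_id: str
    suite: str      # "regression" or "frontier" (hypothetical labels)
    passed: bool


def pass_rate(results, suite):
    """Fraction of cases in the given suite that passed (0.0 if the suite is empty)."""
    scoped = [r for r in results if r.suite == suite]
    return sum(r.passed for r in scoped) / len(scoped) if scoped else 0.0


def newly_passing(baseline, candidate, suite="frontier"):
    """Case ids the candidate passes that the baseline did not pass."""
    base = {r.case_id: r.passed for r in baseline if r.suite == suite}
    return sorted(
        r.case_id
        for r in candidate
        if r.suite == suite and r.passed and not base.get(r.case_id, False)
    )


# Made-up results for illustration only.
baseline = [
    CaseResult("summarize-doc", "regression", True),
    CaseResult("answer-from-wiki", "regression", True),
    CaseResult("multi-step-plan", "frontier", False),
    CaseResult("recover-from-tool-error", "frontier", False),
]
candidate = [
    CaseResult("summarize-doc", "regression", True),
    CaseResult("answer-from-wiki", "regression", True),
    CaseResult("multi-step-plan", "frontier", True),
    CaseResult("recover-from-tool-error", "frontier", False),
]

# Both models score the same on the regression suite...
print(pass_rate(baseline, "regression"), pass_rate(candidate, "regression"))
# ...while the frontier suite shows where the candidate behaves differently.
print(newly_passing(baseline, candidate))
```

In this toy data the regression suite cannot tell the two models apart, while the frontier suite points at the specific use case where the new model could be tried first.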

But evals alone aren't enough. Notion's teams are designed to move quickly. Many of the latest model releases are more than just new functionality in the same API structure. They introduce new concepts of thinking and expose different parameters for teams to experiment with. With Braintrust, Notion's engineers can quickly restructure evaluation methodologies for a new model, so they can tinker and understand what's working.

Finding needle-in-a-haystack problems

At Notion's scale, AI is applied in myriad ways, which means the hardest challenge isn't correcting an error but finding it in the first place amid all the data. Before Braintrust, these problems went unidentified; now Sarah and her team can find them.

One example is Notion's multilingual workspaces. Customers might be working in Japanese, Korean, and English, all in the same workspace, and it can be difficult for AI to figure out what language it should be using. In a product used by so many people, across many languages, this sort of problem could go unnoticed.

Using Braintrust, Notion can find the needle-in-a-haystack problems that do not affect every customer but are high priority for a subset of them. With Braintrust's search functionality and evaluation mechanisms, Notion can create a dataset of all the times language adherence failed to meet expectations. They can then use an LLM as a judge to ensure that Notion AI never regresses on it in the future.
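The workflow described here, collecting failures into a dataset and then scoring against it, can be sketched in a few lines. This is an assumption-laden illustration, not Notion's pipeline: the trace shape (`id`, `input`, `output`) is hypothetical, and a crude Unicode-range heuristic stands in for the LLM judge so the example runs without any model or external service.

```python
def dominant_script(text):
    """Crude stand-in for an LLM judge: guess the language from character ranges.

    Kanji are ignored because they are ambiguous between languages; a real
    judge would need to handle them, along with mixed-language replies.
    """
    counts = {"ja": 0, "ko": 0, "en": 0}
    for ch in text:
        cp = ord(ch)
        if 0x3040 <= cp <= 0x30FF:        # hiragana and katakana
            counts["ja"] += 1
        elif 0xAC00 <= cp <= 0xD7A3:      # hangul syllables
            counts["ko"] += 1
        elif ch.isascii() and ch.isalpha():
            counts["en"] += 1
    best = max(counts, key=counts.get)
    return best if counts[best] > 0 else None


def adheres(trace):
    """True when the reply uses the same dominant script as the request."""
    expected = dominant_script(trace["input"])
    return expected is None or dominant_script(trace["output"]) == expected


def collect_failures(traces):
    """Build a dataset of the traces where language adherence failed."""
    return [t for t in traces if not adheres(t)]


# Hypothetical traces; the field names are assumptions for this sketch.
traces = [
    {"id": "t1", "input": "今日の会議をまとめて", "output": "Here is a summary of today's meeting."},
    {"id": "t2", "input": "회의 요약해 줘", "output": "회의 요약입니다."},
    {"id": "t3", "input": "Summarize the meeting", "output": "The meeting covered three topics."},
]

failure_dataset = collect_failures(traces)
print([t["id"] for t in failure_dataset])
```

Once such a dataset exists, the same inputs can be replayed against every new prompt or model, with the judge's score acting as the regression check.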

I would say for some of our APAC customers, where they rely on multilingual capabilities, that eval alone was probably one of the top improvements that they've had in quality in the past year.

Customization for Notion-specific needs

Notion's data has unique structure and requirements that off-the-shelf evaluation tools cannot address. For Notion, Braintrust is perfect because it's not too opinionated. They can start with a template and figure out how to represent their data in the best way possible.

Notion does a lot of unorthodox things, given its horizontal use case and the wide variety of data handled by its platform. To look at that data properly, Notion uses Braintrust to deploy custom code. For example, they use an iframe component in the bottom right of the evaluation screen, then have their data specialist work on prompt engineering the tools they need to look at the data, label it, and add it to the right evaluation set.

Being able to add custom code on top of Braintrust means they can execute even faster. Notion can act as if they have an entire engineering team building the infrastructure of their evaluation, then use a coding agent to add the extra polish that makes it feel like custom Notion software.

We know our data is special and we know that we have a lot of opinions on how things are structured. Being able to add custom code on top of Braintrust to make it so that we can execute even faster as a team for our own needs, it's the best of both worlds.

Building search infrastructure for LLM traces

As Notion's AI prompts grew from thousands of tokens to hundreds of thousands, the team needed to search not just customer interactions but specific tool calls, error codes, and patterns within massive traces. Standard search was too slow.

The needs of Notion, and their conversations with Braintrust's CEO, were part of why Braintrust built and shipped Brainstore, a database purpose-built for LLM traces. Notion was one of the first adopters.

Brainstore features search indexing infrastructure built for LLM traces, for large contexts, and for the kinds of searches developers want to run. For Notion, it's often not about semantic meaning but about exploring very specific items in their customer data. And they need that search to be fast at scale.
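To make the kind of query concrete, here is a minimal sketch of an exact-match search over nested trace spans. It does not show how Brainstore works: the trace structure (`root`, `children`, `type`, `name`, `error_code`) and the tool names are hypothetical, and the code is a plain linear scan, which is exactly the approach that becomes too slow once traces reach hundreds of thousands of tokens.

```python
def iter_spans(span):
    """Yield a span and all of its descendants, depth first."""
    yield span
    for child in span.get("children", []):
        yield from iter_spans(child)


def find_tool_errors(traces, tool_name, error_code):
    """Return ids of traces containing a call to tool_name that failed with error_code."""
    hits = []
    for trace in traces:
        for span in iter_spans(trace["root"]):
            if (
                span.get("type") == "tool"
                and span.get("name") == tool_name
                and span.get("error_code") == error_code
            ):
                hits.append(trace["id"])
                break  # one match is enough to flag the trace
    return hits


# Hypothetical traces for illustration only.
traces = [
    {
        "id": "trace-1",
        "root": {
            "type": "llm",
            "name": "agent",
            "children": [
                {"type": "tool", "name": "search_pages", "error_code": None},
                {"type": "tool", "name": "update_page", "error_code": "403"},
            ],
        },
    },
    {
        "id": "trace-2",
        "root": {
            "type": "llm",
            "name": "agent",
            "children": [
                {"type": "tool", "name": "update_page", "error_code": None},
            ],
        },
    },
]

print(find_tool_errors(traces, "update_page", "403"))
```

The query itself is simple. The difficulty the article describes is running it quickly across a very large volume of very large traces, which is where a purpose-built index matters.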

The way that Notion builds is primarily based on observability and using evals to understand the problems they need to address for customers. So when their existing databases started breaking, it became a problem. They depend on observability through Braintrust, and Brainstore's speed makes them better at serving their own customers.

The way that Braintrust made us feel when they built Brainstore is how we aim to make Notion customers feel when we build them AI products. We should be sitting with them, understanding what they need, and acting quickly so they don't feel like they're waiting.

The willingness to rebuild

As Sarah thinks about what's next for Notion, and the industry, she has this advice for AI engineering teams: be willing to start over every six months. The models available today are fundamentally different from 12 months ago, and building great products around the strengths of these new capabilities requires rethinking how systems are built.

When models came out that could self-heal on their own tool results, we rebuilt Notion AI, and it was a no-brainer because in order to play to the strengths of these frontier reasoning models, you had to prompt differently. No one likes refactoring. But embracing it as something that enables even more, you have to do it.

Key takeaways

- Build both regression and frontier evals. Regression evals catch breakage, but frontier evals reveal where new models genuinely improve, enabling faster deployment.

- Look for needle-in-a-haystack problems. Some issues affect a small but important subset of customers. Searchable traces and targeted evaluation datasets surface what broad metrics miss.

- Invest in customizable evaluation infrastructure. When your data has unique structure, the ability to deploy custom evaluation code on top of your platform accelerates the entire team.

- Be willing to rebuild. The best AI teams embrace the six-month rebuild cycle, restructuring systems to play to the strengths of new model capabilities.

Thank you to Sarah for sharing Notion's story.

